In mission-critical systems, whether powering servers, telecom infrastructure, medical devices, or aerospace platforms, reliability isn’t optional. A single power supply failure can result in downtime, data loss, or even life-threatening consequences. That’s why redundancy is a foundational principle in high-availability design.

Redundancy in power systems refers to the inclusion of backup power sources that can take over if the primary supply fails. However, not all redundancy strategies offer the same level of protection and efficiency. Engineers typically choose between hot redundancy, where multiple power supplies actively share the load, and cold redundancy, where backup units remain idle until a failure occurs.

This blog explores both strategies in depth, with a particular focus on their application in front-end power supplies. We’ll break down their architectural differences, analyze performance trade-offs, and highlight ideal use cases to help you determine the most suitable strategy for your design.

What Is Hot Redundancy in Front-End Power Supplies?

Hot redundancy, also known as active redundancy, involves multiple power supplies operating simultaneously. It is commonly deployed in an N+1 configuration, where N units are sized to carry the full load and one additional unit provides the margin. Each unit contributes to the total load, and if one fails, the remaining units automatically compensate, ensuring uninterrupted power delivery.

How Hot Redundancy Works

In front-end power supply systems, hot redundancy is typically managed by load-sharing controllers. These intelligent circuits monitor current output and dynamically balance the load across all active units. This prevents any single power supply from being overburdened and ensures consistent voltage regulation.

If a unit fails, the system continues operating without interruption. The faulty supply can be hot-swapped, removed and replaced while the system remains live, making this strategy ideal for environments where downtime is not feasible.
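To make the load-sharing behavior concrete, here is a minimal Python sketch. It assumes idealized equal sharing, and the `Supply` class, the unit names, and the 1 kW ratings are all hypothetical; a real load-share controller balances current in analog or firmware and will not split the load perfectly.

```python
from dataclasses import dataclass


@dataclass
class Supply:
    name: str
    rated_watts: float  # hypothetical rating, for illustration only
    healthy: bool = True


def share_load(supplies, load_watts):
    """Split the load equally across healthy supplies (idealized sharing)."""
    active = [s for s in supplies if s.healthy]
    if not active:
        raise RuntimeError("no healthy supplies left")
    per_unit = load_watts / len(active)
    # Flag any unit that would be pushed past its rating.
    overloaded = [s.name for s in active if per_unit > s.rated_watts]
    if overloaded:
        raise RuntimeError(f"overloaded: {overloaded}")
    return {s.name: per_unit for s in active}


supplies = [Supply("PSU-A", 1000.0), Supply("PSU-B", 1000.0), Supply("PSU-C", 1000.0)]
before = share_load(supplies, 1800.0)  # three units share the load
supplies[1].healthy = False            # simulate PSU-B failing
after = share_load(supplies, 1800.0)   # two survivors pick up the load
print(before)
print(after)
```

In this sketch each unit carries 600 W before the failure and 900 W after it, which stays within the assumed 1 kW rating. That headroom is what allows the failed unit to be swapped while the system stays live.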

Hot Redundancy Example: Data Center Power Supplies

Consider a cloud data center using 1U front-end AC-DC power supplies. These units are mounted in server racks and run in parallel. If one supply fails, the others instantly pick up the load. Technicians can replace the failed unit without shutting down the server, maintaining uptime and service continuity.

In addition to server racks, hot redundancy is increasingly used in edge computing nodes and AI inference clusters, where uninterrupted power is critical for real-time data processing. These applications benefit from the same load-sharing principles, ensuring consistent performance even as workloads fluctuate or hardware components are upgraded.

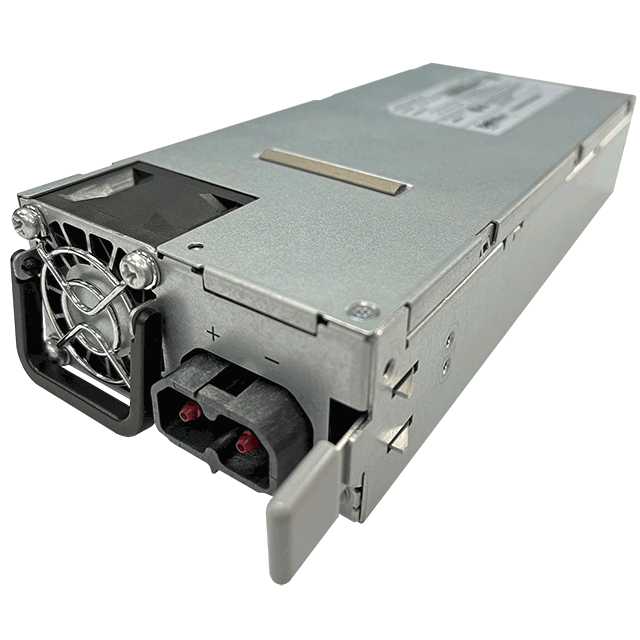

What Is Cold Redundancy in Power Supply Design?

Cold redundancy, also called standby redundancy, takes a more conservative approach. Only one power supply is active at a time, while the others remain off or in low-power standby mode. If the active unit fails, a backup supply powers on and takes over.

How Cold Redundancy Works

Cold redundancy relies on OR-ing circuits, typically implemented with FETs or relays. These components detect when the primary supply fails and redirect power flow to the backup unit. While there may be a brief switchover delay, the system resumes operation with minimal disruption.
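One way to reason about the switchover delay is to ask how much bulk capacitance the output bus needs to ride through it. The sketch below uses the standard capacitor energy balance, P·t = ½·C·(V_start² − V_min²). The 48 V bus, the 40 V minimum, and the 10 ms delay are assumptions chosen for illustration, not figures from any specific product.

```python
def holdup_capacitance(load_watts, switchover_s, v_start, v_min):
    """Capacitance (farads) needed to supply a constant-power load during switchover.

    Derived from P * t = 0.5 * C * (v_start**2 - v_min**2).
    """
    if v_min >= v_start:
        raise ValueError("v_min must be below v_start")
    return 2.0 * load_watts * switchover_s / (v_start**2 - v_min**2)


# Hypothetical numbers: 1.5 kW load, 10 ms switchover, bus allowed to sag from 48 V to 40 V.
c = holdup_capacitance(1500.0, 0.010, 48.0, 40.0)
print(f"{c * 1e3:.1f} mF")
```

The estimate ignores capacitor ESR, tolerance, and aging, so a practical design would add margin on top of the computed value.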

In some designs, cold redundancy is paired with health monitoring circuits that periodically test backup units without activating them. Such proactive diagnostics are especially valuable in systems with long maintenance intervals or limited physical access.

The key advantage of cold redundancy is efficiency. Since only one unit is active at a time, energy consumption is lower, thermal output is reduced, and backup power supplies experience less wear, extending their operational life.
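The efficiency argument can be illustrated with a deliberately simple loss model. The sketch assumes each active supply has a fixed overhead loss, that load-dependent loss is a fixed fraction of output power, and that a cold unit draws a small standby power. All three loss figures are hypothetical. Real efficiency curves are nonlinear, and conduction losses that scale with the square of current can favor sharing, so treat this as a rough comparison only.

```python
def input_power(load_watts, active_units, standby_units,
                fixed_loss_w=15.0, proportional_loss=0.05, standby_loss_w=2.0):
    """Estimate total input power under a simple fixed-plus-proportional loss model.

    All loss parameters are illustrative assumptions, not measured values.
    """
    losses = (active_units * fixed_loss_w          # overhead of each running unit
              + proportional_loss * load_watts     # load-dependent loss
              + standby_units * standby_loss_w)    # idle draw of cold units
    return load_watts + losses


hot = input_power(1000.0, active_units=2, standby_units=0)
cold = input_power(1000.0, active_units=1, standby_units=1)
print(f"hot: {hot:.0f} W, cold: {cold:.0f} W, difference: {hot - cold:.0f} W")
```

Under these assumptions the cold configuration draws less because it pays the fixed overhead only once. The gap narrows or reverses if the standby draw approaches the fixed loss of a running unit.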

Cold Redundancy Example: Telecom Power Systems

A telecom base station in a remote location might use a 1.5 kW front-end power supply to operate normally. A second unit remains cold until needed. This setup conserves energy, reduces heat, and minimizes maintenance, all critical factors in remote or energy-conscious deployments.

| Feature | Hot Redundancy | Cold Redundancy |
|---|---|---|
| Load handling | Shared across PSUs | Single PSU active |
| Efficiency | Lower (all units run) | Higher (only one runs) |
| Reliability | Continuous, no switchover gap | Brief switchover delay |
| Stress on supplies | Evenly distributed | Concentrated on active unit |
| MTBF impact | Higher system uptime | Longer backup lifespan |
| Cost/complexity | Higher (needs load-sharing) | Lower (simpler) |
| Maintenance | Hot-swappable units | May require full shutdown |
| Use case | Data centers, servers, medical | Telecom, industrial, remote systems |

Table 1: Hot vs. Cold Redundancy - Comparing Power Supply Strategies
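The uptime benefit of any redundant arrangement can be estimated with a standard k-of-n availability calculation. The sketch below assumes independent failures and identical units, and the 0.999 per-unit availability is a made-up figure. It also ignores the reliability of the OR-ing or load-sharing circuitry itself, which matters in a real system.

```python
from math import comb


def k_of_n_availability(unit_availability, n_required, n_total):
    """Probability that at least n_required of n_total identical units are working.

    Assumes independent failures (a simplification).
    """
    a = unit_availability
    return sum(comb(n_total, k) * a**k * (1 - a)**(n_total - k)
               for k in range(n_required, n_total + 1))


single = k_of_n_availability(0.999, n_required=1, n_total=1)
redundant = k_of_n_availability(0.999, n_required=1, n_total=2)
print(f"single: {single:.6f}, 1+1 redundant: {redundant:.6f}")
```

With the assumed figure, adding one redundant unit moves availability from 0.999 to about 0.999999. Common-mode failures, such as loss of the shared AC feed, reduce that gain in practice.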

Real-World Applications of Redundancy in Front-End Power Supplies

Hot Redundancy in Data Centers

Modern data centers demand near-zero downtime. Multiple 2 kW front-end power supplies run in parallel to support high-density server racks. If one fails, the others maintain full operation. Hot swapping allows technicians to replace units without interrupting service, ensuring 24/7 availability.
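A quick sizing check for such a rack can be sketched in a few lines of Python. The 7 kW rack load is a hypothetical figure; the 2 kW unit rating follows the example above.

```python
import math


def units_for_n_plus_1(load_watts, unit_watts, spares=1):
    """Return (N, total): N units needed to carry the load, plus spare units."""
    if load_watts <= 0 or unit_watts <= 0:
        raise ValueError("load and unit rating must be positive")
    n = math.ceil(load_watts / unit_watts)
    return n, n + spares


# Hypothetical rack: 7 kW load served by 2 kW supplies.
n, total = units_for_n_plus_1(load_watts=7000, unit_watts=2000)
print(f"N = {n}, installed = {total}")
print(f"per-unit load, all healthy: {7000 / total:.0f} W")
print(f"per-unit load, one failed:  {7000 / n:.0f} W")
```

Here four units can carry the load, so five are installed. Each runs at 1400 W in normal operation and 1750 W after a single failure, both within the 2 kW rating.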

Cold Redundancy in Telecom

Telecom towers, especially in remote or rural areas, prioritize energy efficiency and long-term reliability. A single active power supply handles normal operation, while a backup remains cold. This reduces power draw and extends component life, critical for minimizing maintenance visits and operating costs.

Hybrid/Warm Redundancy in Industrial Systems

Some industrial systems use a warm redundancy approach, where backup supplies remain in standby mode, partially powered but not actively supplying load. This enables faster switchover than cold redundancy while conserving more energy than hot redundancy. It’s a middle ground for applications that need both uptime and efficiency.

How to Choose Between Hot and Cold Redundancy for Your Power System

When selecting the right redundancy model for your front-end power supply, consider these key factors:

- Uptime Requirements: In systems that must remain live at all times, such as financial servers, hospital equipment, or aerospace controls, hot redundancy is the clear choice. It ensures seamless operation even during component failure.
- Efficiency Goals: For systems where energy use is a concern, such as telecom towers or industrial control panels, cold redundancy offers significant savings. Only one unit draws power at a time, reducing heat and extending lifespan.
- Cost and Complexity: Hot redundancy requires load-sharing circuitry, synchronized startup, and hot-swap capability, all of which add cost and design complexity. Cold redundancy is simpler and more cost-effective, especially for smaller or remote systems.
- Space and Thermal Constraints: Hot redundancy means more active units, which can increase heat output and require additional cooling. Cold redundancy reduces thermal load and may allow for more compact designs.
- Maintenance Strategy: Hot redundancy supports live maintenance and hot-swapping, ideal for systems that can’t afford downtime. Cold redundancy may require full shutdown for repairs but reduces wear on backup units.

Match your redundancy strategy to your system’s priorities. If uptime is paramount, go hot. If efficiency and simplicity matter more, go cold. Hybrid approaches may offer the best of both worlds.
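As a rough illustration, the rule of thumb above can be written as a small Python helper. The function name and its two boolean inputs are hypothetical simplifications; a real selection would also weigh cost, space, thermal limits, and maintenance access.

```python
def suggest_strategy(uptime_critical, efficiency_critical):
    """Map two top-level priorities to a redundancy strategy (simplified heuristic)."""
    if uptime_critical and efficiency_critical:
        return "warm"  # hybrid: faster switchover than cold, less draw than hot
    if uptime_critical:
        return "hot"
    # Efficiency-driven, or no strong constraint: favor the simpler option.
    return "cold"


print(suggest_strategy(uptime_critical=True, efficiency_critical=False))
print(suggest_strategy(uptime_critical=False, efficiency_critical=True))
print(suggest_strategy(uptime_critical=True, efficiency_critical=True))
```

The helper only encodes the guidance in this section and is a starting point for discussion rather than a design rule.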

Matching Redundancy Strategy to Your Power Supply Needs

Redundancy is essential for ensuring the reliability and resilience of front-end power supplies in mission-critical systems. Both hot and cold redundancy strategies have their strengths. Ultimately, the right choice depends on your system’s goals, whether that’s maximizing uptime, minimizing energy use, or balancing both. When selecting a front-end power supply, consider not just output specs, but also redundancy capabilities. The right choice can profoundly improve your system’s overall performance, energy efficiency, and long-term reliability.

Explore Bel Fuse’s front-end power supply portfolio featuring hot, cold, and hybrid redundancy options engineered for demanding environments.
